|

|

| This is an simple "empty" scene, the camera is pointing at the end of a table. | The pixels processed in the Mog2 still change enough so flickering dots flash about. This was under a fluorescent light which might contribute to the noise, however, this can be easily be filtered out when grabbing contours by specifying a minimum value of pixes when identifying an object of interest. |

|

|

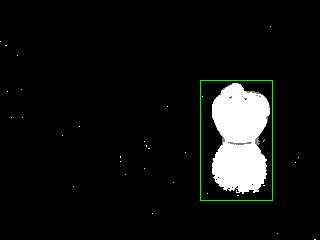

| A strawberry salt shaker is added to scene. | The Mog2 background subtraction does a nice job of isolating the object. The fact that the table is red does not seem to affect it. The pixel difference is so great that the salt shaker is very clear, but so is the salt shaker's reflection ;P |

|

|

| I got the FindContours filter working again (or at least partially) - this is what it finds with certain min / max pixel defintions filled. It has drawn a bounding box around the object & its reflection. As you would expect, it treats the object & its reflection as one object. | |

|

|

|

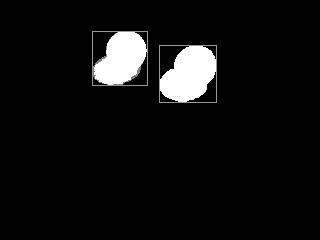

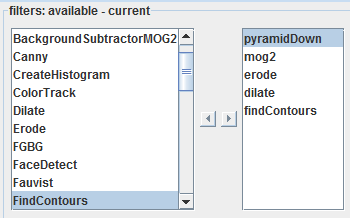

Here is another example with the following filters applied.

Erode and dilate clean up much of the noise. In this case I have moved the ball, and the mog2 filter picks up the object and the "hole" from which the object was moved. The system can tell pretty easily that the ball was moved, in what direction and with some calculations and some assumptions - the distance of movement. Next it would be nice to extract a template of the object of image - with a mask. This will help in the future for identification of a "pink ball" |

Now that the OpenCV service in MRL has been re-designed, it took only 15 minutes to add a brand new OpenCV filter. With the idea that InMoov will want to distinguish objects, I added OpenCV's BackgroundSubtractorMOG2 . The parameters are very simple and it has a "learning" switch. The learning switch tells the subtractor that everything is considered a background or it can be switched to say any new pixel deviation is now a "foreground.

The visual memory COULD possibly include a lot of information. Beginning with the raw image. We should add the FindContours bounding box. But there is so much more info to add.

- time

- position of self & object

- heading of self & object

- distance - base on a variety of strategies (position + heading + perspective

- inferred size

- material

- light source location

- ending or continuing of wall, cieling, & floor planes

- and lots of contextual info

References:

- Mateusz Stankiewicz excellent example

Wow! It is strange how well

Wow! It is strange how well the shadow is detected. Very cool though!

Ya, our brains kindof make a

Ya, our brains kindof make a point of disregarding shadows and highlighting, and typically filter out the "object".. If you draw or paint you might be more inclined to notice such things.. funny how noticing too much detail in the wrong context throws us off.

For example, the robot could/would see the object plus the reflection as a single object.. Trying to grab the thing on the right is challenging in that half of it is in the table (ouch) :P

I'm starting part of the visual memory design. And I thought this would be a good experiment if you want to play....

I want you to describe the picture (the colored one with the strawberry salt shaker) in all possible detail that you can... attributes, positions, materials, whatever, see what your carbon-based-bio-chemical-computer can do :D

Just so you know (in all fairness), I would probably NOT have guessed it was a salt shaker - I would have gone with just a porcelian figurine (never knew what it was till this morning), AND I would have guessed (looking at the picture) - it was a raspberry or tomato - not a strawberry. Interested to hear what you see..

Ya, our brains kindof make a

Ya, our brains kindof make a point of disregarding shadows and highlighting, and typically filter out the "object".. If you draw or paint you might be more inclined to notice such things.. funny how noticing too much detail in the wrong context throws us off.

For example, the robot could/would see the object plus the reflection as a single object.. Trying to grab the thing on the right is challenging in that half of it is in the table (ouch) :P

I'm starting part of the visual memory design. And I thought this would be a good experiment if you want to play....

I want you to describe the picture (the colored one with the strawberry salt shaker) in all possible detail that you can... attributes, positions, materials, whatever, see what your carbon-based-bio-chemical-computer can do :D

Just so you know (in all fairness), I would probably NOT have guessed it was a salt shaker - I would have gone with just a porcelian figurine (never knew what it was till this morning), AND I would have guessed (looking at the picture) - it was a raspberry or tomato - not a strawberry. Interested to hear what you see..

IMHO...

OK, first off you should know that I am color blind. Thats gonna skew the results a little. Here's what I can make out ( remember, the image is quite small and low-res on my monitor). There is a red shiny object resembling a strawberry (yup, with the green top "skirt" and stem, plus the dimples its a dead giveaway) placed on a red table top. There are highlights near the top center of the red body, broken up, indicating a dimpled surface. The light reflected appears to be coming from behind the camera and to the left...most likely from above camera height. The Strawberry has a flattened cylindrical bottom to stabilize it, of lighter color, and appears to sit nearly the same distance from both table edges slightly favoring the right edge, eclipsing the corner behind it. It casts a reflection toward the camera which fades off as the top of the strawberry rounds and curves in, and also casts a shadow behind at approximately 1 o'clock. Along the rear wall bordering the back of the table are two dark marks. one sits up slightly above the tabletop, while the other is larger and possibly partially eclipsed by the tabletop. Both seem to cast reflections toward the camera. To the left is a Pitcher, Vase or Lamp base. It seems to be green, and casts at least two shadows, indicating multiple light sources. The tabletop is reflected in the object, and it appears to be somewhat reflective. There is a highlight on the object 2/3 of the way up, possibly indicating a light source above camera height. To the objects right is another shadow cast on the wall (possibly two). This shadow extends from the tabletop, indicating a light source behind and to the left of the camera. The object casting this shadow is not directly visible. At the top of the green object , in the far left upper corner of the frame is a dark object. Possibly part of a lamp, it is difficult to identify. The tabletop itself is slightly reflective, and seem to be uniform in color. I cannot perceive a wall to the right of the table, but there may be one there. Hows this so far? What you are looking for?

I'm going to try that now!

I'm going to try that now!