Update: I'm kinda geekin' out about this right now... It looks like all those matrix multiplications do something useful...

Did a quick simulation with MRPT of what I believe is a reasonable approximation of the InMoov arm.

So, I have been working a bunch on trying to control the InMoov with a joystick, and it's been difficult.

I was considering moving to an inverse kinematic model for joystick control, where the joystick just moves a point in space and the inverse kinematics computes the proper joint angles.

In order to do this accurately, we need a model of the orientation of each gear (its axis of rotation) and of the lengths of the links between the gears.

In the 1950s, two researchers named Denavit and Hartenberg came up with a minimal set of parameters to describe the degrees of freedom of a robotic arm. The math can get pretty scary, but once the parameters are known and accurate, you can precisely move the hand (end effector) of the robot to any point in space that the robot can reach, quite easily.

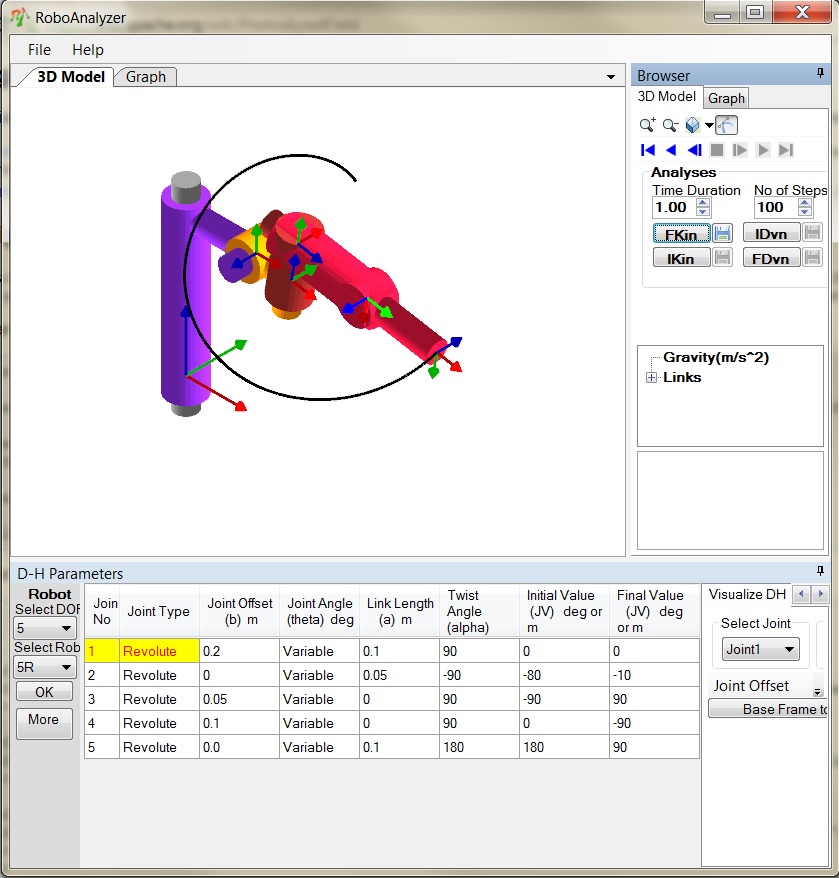

I found a program called RoboAnalyzer that lets you play around with creating these D-H parameters and simulate the arm movements. My goal is to come up with a complete set of (reasonably) accurate D-H parameters so that we can control the InMoov hand.

The good news is, once we have these, we can build full kinematic and dynamic models of the robot, including inertia, forces and angular momentum. Once we know all of those things, we will be well on our way to creating control systems that keep the InMoov balanced as it takes its first steps (when we finally have legs!)

Each joint is described by 4 parameters. (Here's the description of them from the YouTube video below.)

Two of the parameters are lengths, and two of them are angles:

- d - the "depth" along the previous joint's z axis

- θ (theta) - the rotation about the previous z (the angle between the common normal and the previous x axis)

- r - the radius of the new origin about the previous z (the length of the common normal)

- α (alpha) - the rotation about the new x axis (the common normal) to align the old z to the new z.
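Putting the four parameters together: in the classic convention (the one on the Wikipedia page in the references), each link's transform is a translation and rotation along the previous z axis, followed by a translation and rotation along the new x axis. Written out for link n:

$$
{}^{n-1}T_{n} = \mathrm{Trans}_{z_{n-1}}(d_n)\,\mathrm{Rot}_{z_{n-1}}(\theta_n)\,\mathrm{Trans}_{x_n}(r_n)\,\mathrm{Rot}_{x_n}(\alpha_n)
$$

For a revolute joint (a servo), only θ changes as the joint turns; d, r and α are fixed by the geometry of the arm.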

In addition to getting the inverse/forward kinematics correct, having the D-H parameters allows us to properly compute the forces and torques experienced by the robot.

The Borg Mothership has been scouting for candidates to assimilate to support these sorts of simulations and calculations. So far the pickings have been pretty sparse. The Borg is looking for a Java-based kinematics package that supports D-H parameters.

- http://www.nondot.org/sabre/Java/GRAS/ - written for Java 1.0, open source

- http://www.cs.cmu.edu/afs/cs/academic/class/15494-s09/final-projects/2009/dhwizard/index.html - source available, was a research project.

References:

- http://en.wikipedia.org/wiki/Denavit%E2%80%93Hartenberg_parameters

- http://www.roboanalyzer.com/downloads.html

- https://www.youtube.com/watch?v=rA9tm0gTln8

- Measurements of InMoov

- JavaFX Tutorial

- JNI Library with IK

- http://planning.cs.uiuc.edu/web.html

- http://robotics.stanford.edu/~lsentis/files/icra-2006.pdf

- http://www.mrpt.org/

UPDATE:

Recently added are two new classes in MRL, DHLink and DHRobotArm. A DHLink is an object that takes the 4 DH parameters: d, r, theta and alpha. A DHRobotArm is built up from a list of DHLink objects. (Class names subject to change.)

The DHLink can compute the homogeneous transformation matrix for the current state of that link. The homogeneous transformation matrix is a 4x4 matrix that rotates and translates a point in space with respect to the previous reference frame.
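To make this concrete, here is a minimal Java sketch of what such a link computes. It is not the MRL DHLink code: the class name DhLinkSketch and its fields are hypothetical, and it assumes the classic DH convention with angles in radians.

```java
// Hypothetical sketch, NOT MRL's DHLink: builds the 4x4 homogeneous transform
// for one link using the classic DH convention T = Rz(theta) * Tz(d) * Tx(r) * Rx(alpha).
final class DhLinkSketch {
    final double d;      // offset along the previous z axis
    final double r;      // length of the common normal (along the new x axis)
    final double alpha;  // twist about the new x axis, radians
    double theta;        // joint angle about the previous z axis, radians (the "current state")

    DhLinkSketch(double d, double r, double theta, double alpha) {
        this.d = d;
        this.r = r;
        this.theta = theta;
        this.alpha = alpha;
    }

    /** Transform from the previous frame to this link's frame for the current theta. */
    double[][] transform() {
        double ct = Math.cos(theta), st = Math.sin(theta);
        double ca = Math.cos(alpha), sa = Math.sin(alpha);
        return new double[][] {
            { ct, -st * ca,  st * sa, r * ct },
            { st,  ct * ca, -ct * sa, r * st },
            { 0.0,      sa,       ca,      d },
            { 0.0,     0.0,      0.0,    1.0 }
        };
    }

    public static void main(String[] args) {
        // A link with r = 10 (arbitrary units), joint turned 90 degrees about z.
        DhLinkSketch link = new DhLinkSketch(0.0, 10.0, Math.PI / 2, 0.0);
        double[][] t = link.transform();
        System.out.printf("origin of new frame: (%.3f, %.3f, %.3f)%n", t[0][3], t[1][3], t[2][3]);
    }
}
```

The last column of the matrix is where the new frame's origin lands in the previous frame; the upper-left 3x3 block is its rotation.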

The cool thing about these transformation matrices is that you can multiply them together to yield a single transform that represents the whole robot arm.
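A sketch of that chaining step in Java, under the same assumptions (classic DH convention, radians, hypothetical class and method names rather than MRL's DHRobotArm API). The example arm is a toy planar two-link arm with unit link lengths, not measured InMoov values.

```java
// Hypothetical sketch, NOT MRL's DHRobotArm: chain per-link DH transforms by
// matrix multiplication to get the base-to-hand transform.
final class DhChainSketch {

    /** Classic DH link transform Rz(theta) * Tz(d) * Tx(r) * Rx(alpha). */
    static double[][] linkTransform(double d, double r, double theta, double alpha) {
        double ct = Math.cos(theta), st = Math.sin(theta);
        double ca = Math.cos(alpha), sa = Math.sin(alpha);
        return new double[][] {
            { ct, -st * ca,  st * sa, r * ct },
            { st,  ct * ca, -ct * sa, r * st },
            { 0.0,      sa,       ca,      d },
            { 0.0,     0.0,      0.0,    1.0 }
        };
    }

    /** Plain 4x4 matrix product a * b. */
    static double[][] multiply(double[][] a, double[][] b) {
        double[][] c = new double[4][4];
        for (int i = 0; i < 4; i++)
            for (int j = 0; j < 4; j++)
                for (int k = 0; k < 4; k++)
                    c[i][j] += a[i][k] * b[k][j];
        return c;
    }

    /**
     * Forward kinematics. Each row of dh is {d, r, thetaOffset, alpha};
     * jointAngles[i] is added to link i's theta offset.
     */
    static double[][] forwardKinematics(double[][] dh, double[] jointAngles) {
        double[][] t = { {1, 0, 0, 0}, {0, 1, 0, 0}, {0, 0, 1, 0}, {0, 0, 0, 1} };
        for (int i = 0; i < dh.length; i++) {
            double[] p = dh[i];
            t = multiply(t, linkTransform(p[0], p[1], p[2] + jointAngles[i], p[3]));
        }
        return t;
    }

    public static void main(String[] args) {
        // Toy planar two-link arm with unit link lengths, NOT InMoov measurements.
        double[][] dh = { {0, 1, 0, 0}, {0, 1, 0, 0} };
        double[][] hand = forwardKinematics(dh, new double[] {0.0, Math.PI / 2});
        System.out.printf("hand at (%.3f, %.3f, %.3f)%n", hand[0][3], hand[1][3], hand[2][3]);
    }
}
```

With the second joint at 90 degrees, the hand of the toy arm ends up at (1, 1, 0). The multiplication order matters: base link first, hand link last.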

The transformation matrix gives us a lot of information in one shot. It gives us the x, y, z position of the hand in 3D space. With a little extra work, it can also provide the orientation of the hand (rotation about the x, y and z axes) in terms of pitch, roll and yaw.
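One way to do that "little extra work" is sketched below in Java. It assumes the rotation block factors as Rz(yaw) * Ry(pitch) * Rx(roll), a Z-Y-X convention chosen here for illustration; other conventions give different angle values, and this one becomes degenerate when pitch approaches ±90 degrees. The class name is hypothetical.

```java
// Sketch: read the hand position and roll/pitch/yaw out of a 4x4 homogeneous
// transform. ASSUMPTION: rotation R = Rz(yaw) * Ry(pitch) * Rx(roll) (Z-Y-X convention).
final class PoseFromTransform {

    /** Translation column of the transform: {x, y, z}. */
    static double[] position(double[][] t) {
        return new double[] { t[0][3], t[1][3], t[2][3] };
    }

    /** Returns {roll, pitch, yaw} in radians; unreliable when pitch is near +/-90 degrees. */
    static double[] rollPitchYaw(double[][] t) {
        double yaw = Math.atan2(t[1][0], t[0][0]);
        double pitch = Math.atan2(-t[2][0], Math.hypot(t[2][1], t[2][2]));
        double roll = Math.atan2(t[2][1], t[2][2]);
        return new double[] { roll, pitch, yaw };
    }

    /** Builds a transform from a position and Z-Y-X angles (used to check the round trip). */
    static double[][] fromPose(double x, double y, double z, double roll, double pitch, double yaw) {
        double cr = Math.cos(roll), sr = Math.sin(roll);
        double cp = Math.cos(pitch), sp = Math.sin(pitch);
        double cy = Math.cos(yaw), sy = Math.sin(yaw);
        return new double[][] {
            { cy * cp, cy * sp * sr - sy * cr, cy * sp * cr + sy * sr, x },
            { sy * cp, sy * sp * sr + cy * cr, sy * sp * cr - cy * sr, y },
            { -sp,     cp * sr,                cp * cr,                z },
            { 0.0,     0.0,                    0.0,                    1.0 }
        };
    }

    public static void main(String[] args) {
        double[][] t = fromPose(0.1, 0.2, 0.3, 0.1, -0.4, 1.2);
        double[] p = position(t);
        double[] rpy = rollPitchYaw(t);
        System.out.printf("x=%.2f y=%.2f z=%.2f roll=%.2f pitch=%.2f yaw=%.2f%n",
                p[0], p[1], p[2], rpy[0], rpy[1], rpy[2]);
    }
}
```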

So, now the beauty is, if we have accurate DH params for the joints of the InMoov, we can compute the hand position and direction accurately.

For reference, here's the transformation matrix.
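In the classic DH convention used on the Wikipedia page in the references, multiplying out the four elementary motions for link n gives the matrix below; the hand transform is then the product of the per-link matrices from the base outward:

$$
{}^{n-1}T_{n} =
\begin{bmatrix}
\cos\theta_n & -\sin\theta_n\cos\alpha_n & \sin\theta_n\sin\alpha_n & r_n\cos\theta_n \\
\sin\theta_n & \cos\theta_n\cos\alpha_n & -\cos\theta_n\sin\alpha_n & r_n\sin\theta_n \\
0 & \sin\alpha_n & \cos\alpha_n & d_n \\
0 & 0 & 0 & 1
\end{bmatrix},
\qquad
{}^{0}T_{N} = {}^{0}T_{1}\,{}^{1}T_{2}\cdots{}^{N-1}T_{N}
$$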


Cool!

So this could help with the Kinect shoulder problem, you think? Or is it pretty much a simplified version of the virtual InMoov, to be used for learning the coordinates?

One step towards a solution

Yup, one of the intended uses of this is the Kinect accuracy problem. The first part is being able to compute the current position of the arm given the current joint angles. That's what the forward kinematics gives us.

So, now we know the x, y, z position and orientation of the hand for any given set of servo angles. Next is to understand how the x, y and z position of the hand changes when each of those angles moves. This is what the Jacobian matrix will give us (not done yet).
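Until an analytic Jacobian exists, one simple stand-in is to estimate it numerically: nudge each joint angle a little in each direction and see how the hand position moves (central finite differences). The Java sketch below does this for the position rows only. The class and method names are hypothetical, and a toy planar two-link arm stands in for real InMoov parameters.

```java
import java.util.Arrays;

// Sketch: estimate the 3xN position Jacobian (change in hand x, y, z per joint)
// by central finite differences of DH forward kinematics. Names are hypothetical.
final class NumericJacobianSketch {

    /** Classic DH link transform Rz(theta) * Tz(d) * Tx(r) * Rx(alpha). */
    static double[][] linkTransform(double d, double r, double theta, double alpha) {
        double ct = Math.cos(theta), st = Math.sin(theta);
        double ca = Math.cos(alpha), sa = Math.sin(alpha);
        return new double[][] {
            { ct, -st * ca,  st * sa, r * ct },
            { st,  ct * ca, -ct * sa, r * st },
            { 0.0,      sa,       ca,      d },
            { 0.0,     0.0,      0.0,    1.0 }
        };
    }

    static double[][] multiply(double[][] a, double[][] b) {
        double[][] c = new double[4][4];
        for (int i = 0; i < 4; i++)
            for (int j = 0; j < 4; j++)
                for (int k = 0; k < 4; k++)
                    c[i][j] += a[i][k] * b[k][j];
        return c;
    }

    /** Hand position {x, y, z}; each dh row is {d, r, thetaOffset, alpha}. */
    static double[] handPosition(double[][] dh, double[] q) {
        double[][] t = { {1, 0, 0, 0}, {0, 1, 0, 0}, {0, 0, 1, 0}, {0, 0, 0, 1} };
        for (int i = 0; i < dh.length; i++) {
            t = multiply(t, linkTransform(dh[i][0], dh[i][1], dh[i][2] + q[i], dh[i][3]));
        }
        return new double[] { t[0][3], t[1][3], t[2][3] };
    }

    /** Column col holds d(x, y, z)/d(q[col]), approximated with step h (radians). */
    static double[][] jacobian(double[][] dh, double[] q, double h) {
        double[][] jac = new double[3][q.length];
        for (int col = 0; col < q.length; col++) {
            double[] plus = q.clone(), minus = q.clone();
            plus[col] += h;
            minus[col] -= h;
            double[] pp = handPosition(dh, plus), pm = handPosition(dh, minus);
            for (int row = 0; row < 3; row++) jac[row][col] = (pp[row] - pm[row]) / (2 * h);
        }
        return jac;
    }

    public static void main(String[] args) {
        double[][] dh = { {0, 1, 0, 0}, {0, 1, 0, 0} };  // toy planar arm, not InMoov values
        double[][] jac = jacobian(dh, new double[] {0.0, Math.PI / 2}, 1e-6);
        for (double[] row : jac) System.out.println(Arrays.toString(row));
    }
}
```

For the toy arm at joint angles (0, 90 degrees) this gives approximately [[-1, -1], [1, 0], [0, 0]], matching the analytic derivatives of x = cos q1 + cos(q1 + q2) and y = sin q1 + sin(q1 + q2).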

The goal is to pick a point in space (x, y, z) and tell the arm to move to that point. As for the Kinect, we can just track where the hands are and have the arms automatically compute the joint angles that put the InMoov hand where the Kinect hand is.
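A minimal sketch of "pick a point and move there" using the Jacobian-transpose method: repeatedly step the joint angles by a small multiple of J^T times the position error. This is a deliberately simple technique swapped in for illustration (damped least squares or a pseudoinverse usually converges faster). The names are hypothetical, and joint limits, hand orientation and servo speed are all ignored.

```java
// Jacobian-transpose IK sketch (hypothetical names, classic DH convention, radians).
final class JacobianTransposeIkSketch {

    /** Classic DH link transform Rz(theta) * Tz(d) * Tx(r) * Rx(alpha). */
    static double[][] linkTransform(double d, double r, double theta, double alpha) {
        double ct = Math.cos(theta), st = Math.sin(theta);
        double ca = Math.cos(alpha), sa = Math.sin(alpha);
        return new double[][] {
            { ct, -st * ca,  st * sa, r * ct },
            { st,  ct * ca, -ct * sa, r * st },
            { 0.0,      sa,       ca,      d },
            { 0.0,     0.0,      0.0,    1.0 }
        };
    }

    static double[][] multiply(double[][] a, double[][] b) {
        double[][] c = new double[4][4];
        for (int i = 0; i < 4; i++)
            for (int j = 0; j < 4; j++)
                for (int k = 0; k < 4; k++)
                    c[i][j] += a[i][k] * b[k][j];
        return c;
    }

    /** Hand position {x, y, z}; each dh row is {d, r, thetaOffset, alpha}. */
    static double[] handPosition(double[][] dh, double[] q) {
        double[][] t = { {1, 0, 0, 0}, {0, 1, 0, 0}, {0, 0, 1, 0}, {0, 0, 0, 1} };
        for (int i = 0; i < dh.length; i++) {
            t = multiply(t, linkTransform(dh[i][0], dh[i][1], dh[i][2] + q[i], dh[i][3]));
        }
        return new double[] { t[0][3], t[1][3], t[2][3] };
    }

    /** 3xN position Jacobian by central differences with step h. */
    static double[][] jacobian(double[][] dh, double[] q, double h) {
        double[][] jac = new double[3][q.length];
        for (int col = 0; col < q.length; col++) {
            double[] plus = q.clone(), minus = q.clone();
            plus[col] += h;
            minus[col] -= h;
            double[] pp = handPosition(dh, plus), pm = handPosition(dh, minus);
            for (int row = 0; row < 3; row++) jac[row][col] = (pp[row] - pm[row]) / (2 * h);
        }
        return jac;
    }

    /** Repeats q += step * J^T * (target - hand) until the position error drops below tol. */
    static double[] solve(double[][] dh, double[] start, double[] target,
                          double step, double tol, int maxIterations) {
        double[] q = start.clone();
        for (int it = 0; it < maxIterations; it++) {
            double[] p = handPosition(dh, q);
            double[] e = { target[0] - p[0], target[1] - p[1], target[2] - p[2] };
            if (Math.sqrt(e[0] * e[0] + e[1] * e[1] + e[2] * e[2]) < tol) break;
            double[][] jac = jacobian(dh, q, 1e-6);
            for (int k = 0; k < q.length; k++) {
                q[k] += step * (jac[0][k] * e[0] + jac[1][k] * e[1] + jac[2][k] * e[2]);
            }
        }
        return q;
    }

    public static void main(String[] args) {
        double[][] dh = { {0, 1, 0, 0}, {0, 1, 0, 0} };  // toy planar arm, not InMoov values
        double[] target = {1.0, 1.0, 0.0};
        double[] q = solve(dh, new double[] {0.3, 1.0}, target, 0.1, 1e-10, 20000);
        double[] p = handPosition(dh, q);
        System.out.printf("q = (%.4f, %.4f) -> hand (%.4f, %.4f, %.4f)%n", q[0], q[1], p[0], p[1], p[2]);
    }
}
```

Starting from joint angles (0.3, 1.0) rad, the toy arm settles at about (0, π/2), which puts its hand on the target (1, 1, 0). For the Kinect case, the target would presumably be the tracked hand position converted into the robot's base frame.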

More to come!


Awesome post kwatters.

Is the "State" information showing the matrix values for the highlighted XYZ of joint 5 as it changes?

Are the lengths proportional to InMoov? To me it seems like the double omoplate shoulder joint is longer, but maybe this is an illusion caused by the various coverings on InMoov, and structurally it's proportionally accurate?

I'd like to try modeling what you've done with a Blender output (when I get back to that :)

InMoov Arm Kinematics and Inverse Kinematics

Have you finished the kinematics and inverse kinematics of InMoov? Is there any code for that online? I have used RoboAnalyzer for simulation, but what should be done to deploy the kinematic algorithm on the real robot?